BEAT: Tokenizing and Generating Symbolic Music by Uniform Temporal Steps

Published in ICML, 2026

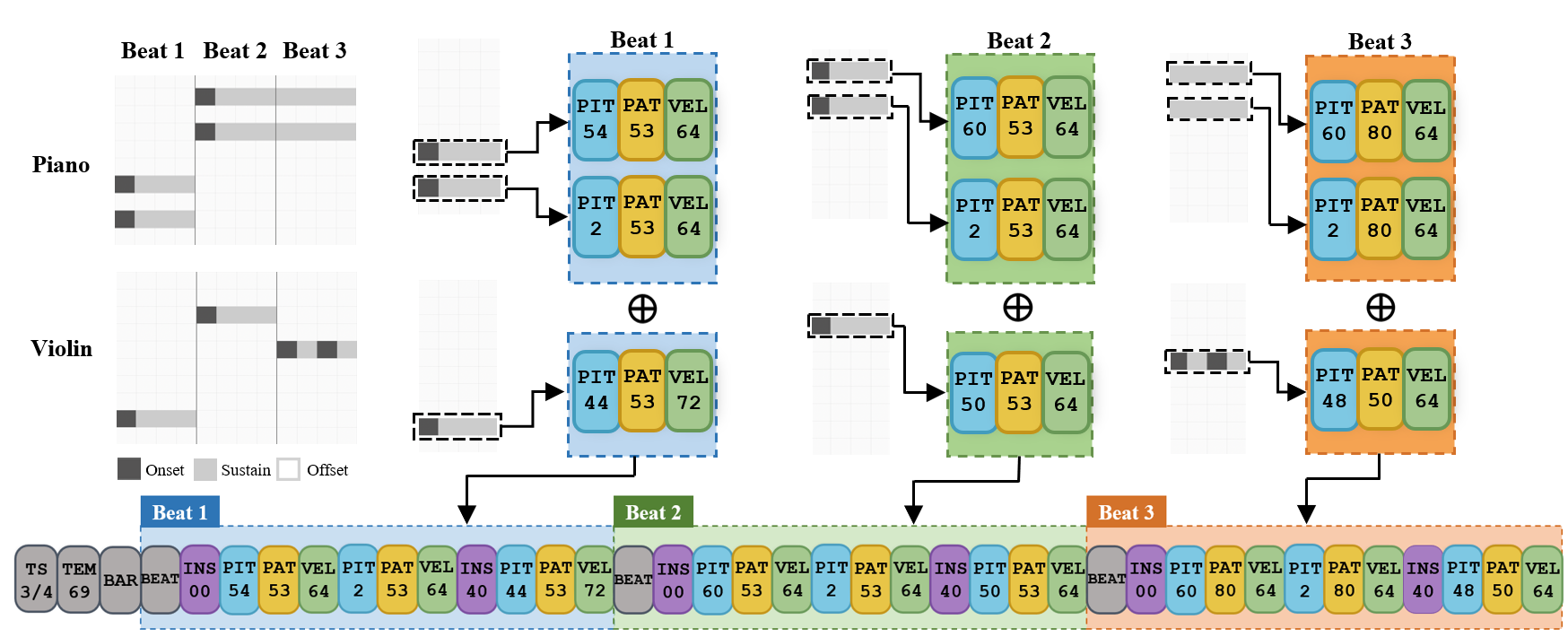

Overview of the BEAT tokenization: notes are re-expressed as temporal states (onset/sustain/rest) and serialized beat by beat, enumerating only active pitches within each beat.

Abstract

Event-based representations have driven rapid progress in symbolic music generation, yet they implicitly treat music as a temporal point process — events arriving sequentially at discrete time points. This view captures only part of the picture: notes extend in time, overlap, and coexist, especially in polyphonic music. We argue for a fundamental shift from event-centric to time-centric organization, where representations answer “what happens at this moment?” rather than “when does this note occur?”.

Time-centric organization yields two structural advantages:

| Advantage | Description |
|---|---|
| Temporal relativity | Rhythmic patterns are learned once and generalize across absolute positions |
| Precise temporal control | Sequence position corresponds directly to musical time |

The piano-roll embodies this perspective but cannot be directly serialized — it is dense along time yet sparse along pitch. To bridge this gap, we propose BEAT (Beat-aligned Enumeration of Active Tones), which re-expresses notes as temporal states (onset/sustain/rest) and encodes music beat by beat, enumerating only active pitches within each beat.
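As a rough illustration of the idea described above, the following minimal sketch converts a list of notes into per-step states and then serializes them beat by beat, listing only the pitches that are active in each beat. The token names (`BEAT`, `P60`), the four-steps-per-beat grid, the `(pitch, start, end)` note format, and the packing of a beat's states into a short string such as `osss` are all assumptions made for this example; they are not the vocabulary or layout used by BEAT itself.

```python
from collections import defaultdict

# Hypothetical state symbols for this sketch (not the paper's vocabulary).
REST, ONSET, SUSTAIN = "r", "o", "s"


def notes_to_states(notes, n_steps):
    """Map each pitch to a per-step list of states (onset/sustain/rest).

    `notes` is a list of (pitch, start_step, end_step) with end exclusive.
    """
    states = defaultdict(lambda: [REST] * n_steps)
    for pitch, start, end in notes:
        row = states[pitch]
        row[start] = ONSET
        for t in range(start + 1, min(end, n_steps)):
            row[t] = SUSTAIN
    return states


def serialize(notes, steps_per_beat=4):
    """Serialize notes beat by beat, enumerating only active pitches."""
    if not notes:
        return []
    n_steps = max(end for _, _, end in notes)
    n_beats = -(-n_steps // steps_per_beat)  # ceiling division
    states = notes_to_states(notes, n_beats * steps_per_beat)

    tokens = []
    for beat in range(n_beats):
        lo, hi = beat * steps_per_beat, (beat + 1) * steps_per_beat
        tokens.append("BEAT")  # one marker per beat, even if the beat is silent
        for pitch in sorted(states):
            window = states[pitch][lo:hi]
            if any(s != REST for s in window):  # skip pitches silent in this beat
                tokens.append(f"P{pitch}")
                tokens.append("".join(window))
    return tokens


if __name__ == "__main__":
    # C4 held for six steps, E4 entering on the second beat.
    print(serialize([(60, 0, 6), (64, 4, 8)]))
```

In this sketch every beat contributes exactly one `BEAT` marker, including silent beats, so counting markers in the sequence recovers musical time. That property is what the sketch is meant to convey about time-centric organization; the actual encoding may achieve it differently.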

BEAT achieves state-of-the-art performance on piano and multi-track continuation, exhibits stronger sequence regularity under BPE compression, and outperforms event-based baselines on probing tasks testing temporal structure learning. A real-time accompaniment task further demonstrates precise temporal control — a capability structurally inaccessible to event-based representations.